Style Change Detection 2021

Synopsis

- Task: Given a document, determine the number of authors and at which positions the author changes.

- Input: StackExchange questions and answers, combined into documents [data]

- Output: Whether a document has multiple authors, how many and where authorship changes [verifier]

- Evaluation: F1 [code]

- Submission: Deployment on TIRA [submit]

Task

The goal of the style change detection task is to identify text positions within a given multi-author document at which the author switches. This raises a fundamental question: if multiple authors have written a text together, can we find evidence for this fact, e.g., do we have a means to detect variations in the writing style? Answering this question is among the most difficult and most interesting challenges in author identification: style change detection is the only means to detect plagiarism in a document if no comparison texts are given; likewise, style change detection can help to uncover gift authorships, to verify a claimed authorship, or to develop new technology for writing support.

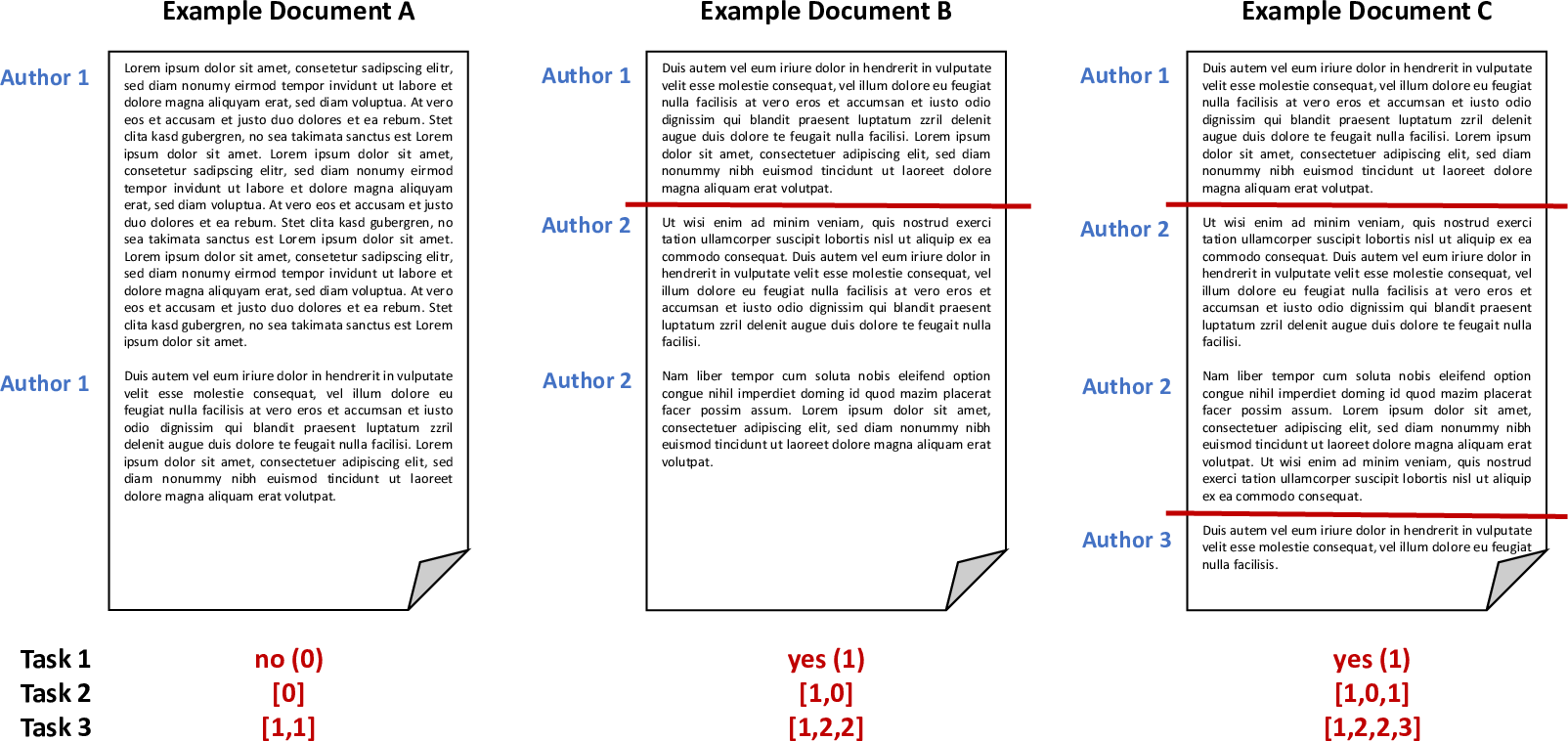

Previous editions of the Style Change Detection task aimed at, e.g., detecting whether a document is single- or multi-authored (2018), determining the actual number of authors within a document (2019), or detecting whether there was a style change between two consecutive paragraphs (2020). Considering the promising results achieved by the submitted approaches, we aim to steer the task back to its original goal: detecting the exact position of authorship changes. Therefore, the task for PAN'21 is to first detect whether a document was authored by one or multiple authors; for two-author documents, to find the position of the authorship change; and for multi-author documents, to find all positions of authorship changes.

Given a document, we ask participants to answer the following three questions:

- Single vs. Multiple. Given a text, find out whether the text is written by a single author or by multiple authors (task 1).

- Style Change Basic. Given a text written by two or more authors that contains a number of style changes, find the positions of the changes (task 2).

- Style Change Real-World. Given a text written by two or more authors, find all positions of writing style changes, i.e., assign each paragraph of the text uniquely to one of the authors you assume for the multi-author document (task 3).
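The three tasks are nested: once every paragraph has been assigned to an author (task 3), the answers to tasks 1 and 2 follow directly. The Python sketch below illustrates this, assuming an approach that produces arbitrary per-paragraph labels (e.g., cluster ids); the function name `answers_from_assignment` is hypothetical. It renumbers the labels in order of first appearance, matching the ground truth convention described under Input Format, and derives the other two answers from the result.

```python
from typing import Dict, List, Sequence, Union


def answers_from_assignment(labels: Sequence[int]) -> Dict[str, Union[int, List[int]]]:
    """Derive task 1-3 answers from a per-paragraph author assignment.

    `labels` may use arbitrary ids (e.g. cluster ids); they are renumbered
    so that the first author to appear is 1, the next new author is 2, etc.
    """
    renumber: Dict[int, int] = {}
    paragraph_authors: List[int] = []
    for label in labels:
        if label not in renumber:
            renumber[label] = len(renumber) + 1  # next unused author number
        paragraph_authors.append(renumber[label])

    # Task 2: one binary value per pair of consecutive paragraphs.
    changes = [int(a != b) for a, b in zip(paragraph_authors, paragraph_authors[1:])]

    # Task 1: multi-authored iff more than one distinct author was assigned.
    multi_author = int(len(renumber) > 1)

    return {
        "multi-author": multi_author,
        "changes": changes,
        "paragraph-authors": paragraph_authors,
    }


print(answers_from_assignment([7, 7, 3, 7]))
# {'multi-author': 1, 'changes': [0, 1, 1], 'paragraph-authors': [1, 1, 2, 1]}
```

Deriving tasks 1 and 2 this way keeps the three answers consistent with each other, although the tasks are scored independently and an approach may also solve them separately.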

All documents are provided in English and may contain an arbitrary number of style changes, resulting from at most five different authors. However, style changes may only occur between paragraphs (i.e., a single paragraph is always authored by a single author and does not contain any style changes).

The following figure illustrates some possible scenarios and the expected output for the tasks:

Data

To develop and then test your algorithms, we provide a data set including ground truth information. It contains texts from a relatively narrow set of technology-related subjects.

The dataset is split into three parts:

- training set: Contains 70% of the whole data set and includes ground truth data. Use this set to develop and train your models.

- validation set: Contains 15% of the whole data set and includes ground truth data. Use this set to evaluate and optimize your models.

- test set: Contains 15% of the whole data set. For the documents in the test set, you are not given ground truth data. This set is used for evaluation (see Evaluation below).

You are free to use additional external data for training your models. However, we ask you to make any additional data you use freely available under a suitable license.

Input Format

The dataset is based on user posts from various sites of the StackExchange network, covering different topics. We refer to each input problem (i.e., the document for which to detect style changes) by an ID, which is subsequently also used to identify the submitted solution to this input problem. We provide one folder each for the training, validation, and test data.

For each problem instance X (i.e., each input document), two files are provided:

- problem-X.txt contains the actual text, where paragraphs are separated by \n\n.

- truth-problem-X.json contains the ground truth, i.e., the correct solution in JSON format:

{ "authors": NUMBER_OF_AUTHORS, "site": SOURCE_SITE, "multi-author": RESULT_TASK1, "changes": RESULT_ARRAY_TASK2, "paragraph-authors": RESULT_ARRAY_TASK3 }

The result for task 1 (key "multi-author") is a binary value (1 if the document is multi-authored, 0 if the document is single-authored). The result for task 2 (key "changes") is represented as an array holding a binary value for each pair of consecutive paragraphs within the document (0 if there was no style change, 1 if there was a style change). If the document is single-authored, the solution to task 2 is an array filled with 0s. For task 3 (key "paragraph-authors"), the result is the order of authors contained in the document (e.g., [1, 2, 1] for a two-author document), where the first author is "1", the second author appearing in the document is referred to as "2", etc. Furthermore, we provide the total number of authors and the StackExchange site the texts were extracted from (i.e., the topic).

An example of a multi-author document, where there was a style change between the third and fourth paragraphs, could look as follows (we only list the relevant key/value pairs here):

{ "multi-author": 1, "changes": [0,0,1,...], "paragraph-authors": [1,1,1,2,...] }

A single-author document would have the following form (again, only listing the relevant key/value pairs):

{ "multi-author": 0, "changes": [0,0,0,...], "paragraph-authors": [1,1,1,...] }
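Reading one training or validation instance can be sketched in Python as follows. The helper name `load_instance` is hypothetical, and stripping surrounding whitespace before splitting on "\n\n" is an assumption about how the files are laid out. The two length checks simply restate the format above: one author label per paragraph and one change label per pair of consecutive paragraphs.

```python
import json
from pathlib import Path
from typing import Any, Dict, List, Tuple


def load_instance(folder: Path, problem_id: str) -> Tuple[List[str], Dict[str, Any]]:
    """Read problem-<id>.txt and truth-problem-<id>.json from `folder`."""
    text = (folder / f"problem-{problem_id}.txt").read_text(encoding="utf-8")
    # Paragraphs are separated by "\n\n"; strip() avoids an empty trailing paragraph.
    paragraphs = text.strip().split("\n\n")

    with open(folder / f"truth-problem-{problem_id}.json", encoding="utf-8") as fh:
        truth = json.load(fh)

    # Consistency checks implied by the format description.
    n = len(paragraphs)
    if len(truth["paragraph-authors"]) != n:
        raise ValueError("expected one author label per paragraph")
    if len(truth["changes"]) != n - 1:
        raise ValueError("expected one change label per pair of consecutive paragraphs")
    return paragraphs, truth
```

If the checks fail on a real file, the paragraph splitting is the first thing to revisit.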

Output Format

To evaluate the solutions for the tasks, the classification results have to be stored in a single file for each of the input documents. Please note that we require a solution file to be generated for each input problem. The data structure during the evaluation phase will be similar to that in the training phase, with the exception that the ground truth files are missing.

For each given problem problem-X.txt, your software should output the missing solution file solution-problem-X.json, containing a JSON object with three properties, one for each task. The actual solution for task 1 is a binary value (0 or 1). For task 2, the solution is an array containing a binary value for each pair of consecutive paragraphs. For task 3, the solution is an array containing the author number (in order of appearance in the given document) for each paragraph.

An example solution file for a multi-authored document is featured in the following:

{
  "multi-author": 1,
  "changes": [0,0,1,...],
  "paragraph-authors": [1,1,1,2,...]
}

For a single-authored document, the solution file may look as follows:

{
  "multi-author": 0,
  "changes": [0,0,0,...],
  "paragraph-authors": [1,1,1,...]
}

We provide you with a script to check the validity of the solution files [verifier and tests].
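The provided verifier remains the authority on what counts as a valid solution file. As a rough illustration of the checks the format above implies, the following Python sketch inspects a solution object before it is written to disk; the function `check_solution` is hypothetical and not part of the provided scripts. It checks that the three keys are present, that task 1 and task 2 values are binary, that the array lengths match the number of paragraphs, and that authors are numbered in order of appearance.

```python
from typing import Any, Dict, List


def check_solution(solution: Dict[str, Any], num_paragraphs: int) -> List[str]:
    """Return a list of problems found in a solution object (empty if none)."""
    problems: List[str] = []
    for key in ("multi-author", "changes", "paragraph-authors"):
        if key not in solution:
            problems.append(f"missing key: {key}")
    if problems:
        return problems

    if solution["multi-author"] not in (0, 1):
        problems.append("multi-author must be 0 or 1")

    changes = solution["changes"]
    if len(changes) != num_paragraphs - 1:
        problems.append("changes needs one value per pair of consecutive paragraphs")
    if any(c not in (0, 1) for c in changes):
        problems.append("changes may only contain 0 and 1")

    authors = solution["paragraph-authors"]
    if len(authors) != num_paragraphs:
        problems.append("paragraph-authors needs one value per paragraph")

    # Authors must be numbered in order of appearance: 1 first, then 2, ...
    seen = 0  # highest author number introduced so far
    for a in authors:
        if a == seen + 1:
            seen += 1  # a new author appears
        elif not (isinstance(a, int) and 1 <= a <= seen):
            problems.append("paragraph-authors must number authors in order of appearance")
            break
    return problems
```

For example, `[2, 2, 1]` is rejected because the first author to appear must be numbered 1.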

Evaluation

Submissions are evaluated using the F1-score for each document. The three tasks are evaluated independently, and we compute the macro-averaged F1-score across all documents.

We provide you with a script to compute these measures from the produced output files [evaluator and tests].
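A common reading of a macro-averaged F1-score is to compute F1 separately for each class label (here 0 and 1) and take the unweighted mean, so that the rarer class counts as much as the frequent one. The Python sketch below shows that computation. How labels are grouped before scoring (e.g., one task 1 label per document, or the task 2 labels of all paragraph pairs) and the convention of scoring a label with no true positives as 0 are assumptions here; the provided evaluator script defines the official scores.

```python
from typing import Iterable, Sequence


def f1_for_label(truth: Sequence[int], pred: Sequence[int], label: int) -> float:
    """F1-score treating `label` as the positive class."""
    tp = sum(1 for t, p in zip(truth, pred) if t == label and p == label)
    fp = sum(1 for t, p in zip(truth, pred) if t != label and p == label)
    fn = sum(1 for t, p in zip(truth, pred) if t == label and p != label)
    if tp == 0:
        # Precision or recall is 0 (or undefined); score 0 by convention.
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def macro_f1(truth: Sequence[int], pred: Sequence[int], labels: Iterable[int] = (0, 1)) -> float:
    """Unweighted mean of the per-label F1-scores."""
    if len(truth) != len(pred):
        raise ValueError("truth and prediction must have the same length")
    label_list = list(labels)
    return sum(f1_for_label(truth, pred, label) for label in label_list) / len(label_list)


# Task 1 style example: one multi-author label per document.
print(macro_f1([1, 0, 1, 1], [1, 0, 0, 1]))  # ≈ 0.733 (mean of 0.8 and 0.667)
```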

Submission

Once you finish tuning your approach on the validation set, your software will be tested on the test set. During the competition, the test set will not be released publicly. Instead, we ask you to submit your software for evaluation at our site as described below.

We ask you to prepare your software so that it can be executed via command line calls. The command shall take as input (i) an absolute path to the directory of the test corpus and (ii) an absolute path to an empty output directory:

mySoftware -i INPUT-DIRECTORY -o OUTPUT-DIRECTORY

Within INPUT-DIRECTORY, you will find the set of problem instances (i.e., problem-[id].txt files). For each problem instance you should produce the solution file solution-problem-[id].json in the OUTPUT-DIRECTORY. For instance, you read INPUT-DIRECTORY/problem-12.txt, process it and write your results to OUTPUT-DIRECTORY/solution-problem-12.json.
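A minimal Python skeleton of such a command-line program might look as follows, assuming the `-i`/`-o` interface above. The function `predict` is a hypothetical placeholder that always answers "single author" and would be replaced by an actual approach; splitting paragraphs on "\n\n" follows the input format description.

```python
import argparse
import json
from pathlib import Path
from typing import List, Optional


def predict(paragraphs: List[str]) -> dict:
    """Placeholder baseline: always predicts a single-author document."""
    n = len(paragraphs)
    return {
        "multi-author": 0,
        "changes": [0] * max(n - 1, 0),
        "paragraph-authors": [1] * n,
    }


def main(argv: Optional[List[str]] = None) -> None:
    parser = argparse.ArgumentParser(description="Style change detection (sketch)")
    parser.add_argument("-i", dest="input_dir", required=True,
                        help="directory containing problem-[id].txt files")
    parser.add_argument("-o", dest="output_dir", required=True,
                        help="directory for solution-problem-[id].json files")
    args = parser.parse_args(argv)

    input_dir = Path(args.input_dir)
    output_dir = Path(args.output_dir)
    output_dir.mkdir(parents=True, exist_ok=True)  # harmless if it already exists

    for problem_file in sorted(input_dir.glob("problem-*.txt")):
        problem_id = problem_file.stem[len("problem-"):]  # "problem-12" -> "12"
        paragraphs = problem_file.read_text(encoding="utf-8").strip().split("\n\n")
        solution = predict(paragraphs)
        out_path = output_dir / f"solution-problem-{problem_id}.json"
        with open(out_path, "w", encoding="utf-8") as fh:
            json.dump(solution, fh)
```

In a real submission, `main()` would be called from the script's entry point (e.g., under `if __name__ == "__main__":`), so that `mySoftware -i ... -o ...` passes its arguments through `sys.argv`.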

In general, this task follows PAN's software submission strategy described here.

Note: By submitting your software you retain full copyrights. You agree to grant us usage rights only for the purpose of the PAN competition. We agree not to share your software with a third party or use it for purposes other than the PAN competition.

Results

| Team | Task 1 (F1) | Task 2 (F1) | Task 3 (F1) |
|---|---|---|---|
| Zhang et al. | 0.753 | 0.751 | 0.501 |
| Strom | 0.795 | 0.707 | 0.424 |
| Singh et al. | 0.634 | 0.657 | 0.432 |
| Deibel et al. | 0.621 | 0.669 | 0.263 |
| Nath | 0.704 | 0.647 | --- |

Related Work

- Style Change Detection, PAN@CLEF'20

- Style Change Detection, PAN@CLEF'19

- Style Change Detection, PAN@CLEF'18

- Style Breach Detection, PAN@CLEF'17

- PAN@CLEF'16 (Clustering by Authorship Within and Across Documents and Author Diarization section)

- J. Cardoso and R. Sousa. Measuring the Performance of Ordinal Classification. International Journal of Pattern Recognition and Artificial Intelligence, Volume 25, Issue 8, pages 1173-1195, 2011.

- Benno Stein, Nedim Lipka and Peter Prettenhofer. Intrinsic Plagiarism Analysis. Language Resources and Evaluation, Volume 45, Issue 1, pages 63-82, 2011.

- Efstathios Stamatatos. A Survey of Modern Authorship Attribution Methods. Journal of the American Society for Information Science and Technology, Volume 60, Issue 3, pages 538-556, March 2009.