Hyperpartisan News Detection 2019

Synopsis

- Task: Given a news article text, decide whether it follows a hyperpartisan argumentation, i.e., whether it exhibits blind, prejudiced, or unreasoning allegiance to one party, faction, cause, or person.

- Input: [data]

- Evaluation: [code]

- Submission: [submit]

- Baseline: [code]


Data

The competition is over, but the data is still available here.

Evaluation

The main performance measure is accuracy on a balanced set of articles. In addition, we measure precision, recall, and F1-score for the hyperpartisan class. The SemEval leaderboard can be found here.
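As a minimal sketch of these measures (not the official evaluator linked above), the function below computes accuracy over all articles plus precision, recall, and F1 with the hyperpartisan class treated as the positive class. The function name `evaluate` and the encoding of labels as Python booleans (`True` = hyperpartisan) are assumptions made for illustration.

```python
from typing import Dict, Sequence


def evaluate(gold: Sequence[bool], pred: Sequence[bool]) -> Dict[str, float]:
    """Accuracy plus precision/recall/F1 for the hyperpartisan (positive) class.

    Hypothetical helper: labels are booleans, True = hyperpartisan.
    """
    if len(gold) != len(pred):
        raise ValueError("gold and pred must have the same length")
    # Confusion-matrix counts with "hyperpartisan" as the positive class.
    tp = sum(g and p for g, p in zip(gold, pred))
    fp = sum((not g) and p for g, p in zip(gold, pred))
    fn = sum(g and (not p) for g, p in zip(gold, pred))
    tn = len(gold) - tp - fp - fn
    accuracy = (tp + tn) / len(gold) if gold else 0.0
    # Guard against division by zero, e.g. a system that never predicts True.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}
```

For example, `evaluate([True, True, False, False], [True, False, True, False])` yields 0.5 for all four measures. Returning 0.0 instead of raising on an empty denominator is a design choice of this sketch; the official evaluator may handle that case differently.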

Ongoing Submissions

If you work on this task and publish your code on GitHub, send us an email and we will add it to our list. Since the dataset has been public since December 2021, you no longer need to use TIRA to access it.

To kickstart new approaches, we provide a random baseline that illustrates the output of a submission and a term frequency extractor that illustrates how to read the dataset. For feature ideas, see the code from our ACL'18 publication.
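The official baseline and extractor are linked above; the following is only a rough Python sketch of the same two ideas. It assumes (this page does not state it) that articles are stored as `<article id="...">` elements, possibly containing nested markup such as `<p>`, under a single XML root. The helper names `read_articles`, `term_frequencies`, and `random_baseline` are hypothetical, and the sketch does not write any particular submission file format.

```python
import random
import re
import xml.etree.ElementTree as ET
from collections import Counter
from typing import Dict, List, Tuple


def read_articles(xml_text: str) -> List[Tuple[str, str]]:
    """Return (article id, plain text) pairs.

    ASSUMPTION: each article is an <article id="..."> element under one root;
    itertext() flattens nested markup such as <p> or <a> into plain text.
    """
    root = ET.fromstring(xml_text)
    return [(a.get("id"), "".join(a.itertext())) for a in root.iter("article")]


def term_frequencies(text: str) -> Counter:
    """Lower-cased word counts using a deliberately simple regex tokenizer."""
    return Counter(re.findall(r"[a-z0-9']+", text.lower()))


def random_baseline(article_ids: List[str], seed: int = 0) -> Dict[str, bool]:
    """Assign each article a uniformly random label (True = hyperpartisan)."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    return {article_id: rng.random() < 0.5 for article_id in article_ids}
```

On a balanced test set, a random baseline like this should score around 0.5 accuracy, which is a useful floor when reading the leaderboards below. For large files, `ET.parse` on a file path (or `ET.iterparse`) avoids loading the whole document as one string.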

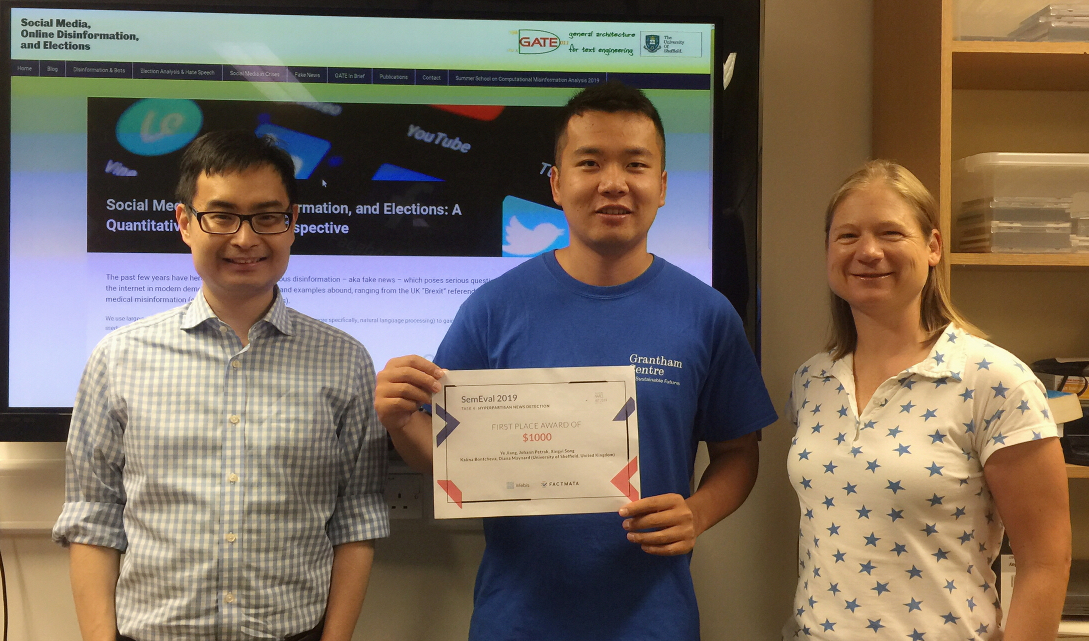

Grand Prize

To encourage developers to share their software so that everyone can profit from their work, we announced a grand prize of $1,000 for the best-performing submission whose code was published as open source before the SemEval conference (held at NAACL-HLT on June 6-7, 2019, in Minneapolis, USA).

The grand prize has been won by team bertha-von-suttner (Ye Jiang, Johann Petrak, Xingyi Song, Kalina Bontcheva, Diana Maynard from the University of Sheffield, United Kingdom). Well done!

Results

Main Leaderboard

| # | Team | Members | Accuracy | Precision | Recall | F1 | Code |
|---|---|---|---|---|---|---|---|
| 1 | bertha-von-suttner | Ye Jiang, Johann Petrak, Xingyi Song, Kalina Bontcheva, Diana Maynard (University of Sheffield, United Kingdom) | 0.822 | 0.871 | 0.755 | 0.809 | |
| 2 | vernon-fenwick | Vertika Srivastava, Yeon Hyang Kim, Divya Prakash, Ankita Gupta, Rohit R.R, Sudeep Kumar Sahoo (Samsung R&D Institute India-Bangalore) | 0.820 | 0.815 | 0.828 | 0.821 | |
| 3 | sally-smedley | Shota Sasaki¹, Kazuaki Hanawa¹, Tsubasa Tagami¹, Hiroki Ouchi¹˒², Jun Suzuki¹˒², Kentaro Inui¹˒² (¹Tohoku University / ²RIKEN AIP, Japan) | 0.809 | 0.823 | 0.787 | 0.805 | |
| 4 | tom-jumbo-grumbo | Chia-Lun Yeh, Nava Tintarev (TU Delft, Netherlands), Babak Loni, Anne Schuth (De Persgroep) | 0.806 | 0.858 | 0.732 | 0.790 | |
| 5 | dick-preston | Tim Isbister (FOI, Sweden) | 0.803 | 0.793 | 0.818 | 0.806 | |
| 6 | borat-sagdiyev | Niko Palić, Juraj Vladika, Dominik Čubelić, Ivan Lovrenčić, Jan Šnajder, Maja Buljan (TakeLab, University of Zagreb, Croatia) | 0.791 | 0.883 | 0.672 | 0.763 | |
| 7 | morbo | Maja Karasalo, Andreas Horndahl, Magnus Rosell, Fredrik Johansson (FOI, Sweden) | 0.790 | 0.772 | 0.822 | 0.796 | |
| 8 | howard-beale | Ozan Arkan Can, Osman Mutlu (Koç University, Turkey), Erenay Dayanık (University of Stuttgart, Germany) | 0.783 | 0.837 | 0.704 | 0.765 | |
| 9 | ned-leeds | Bozhidar Stevanoski, Sonja Gievska (Ss. Cyril and Methodius Skopje, Macedonia) | 0.775 | 0.865 | 0.653 | 0.744 | |
| 10 | clint-buchanan | Pedro Sandoval Segura, Mehdi Drissi, Vivaswat Ojha, Julie Medero (Harvey Mudd College, United States) | 0.771 | 0.832 | 0.678 | 0.747 | |
| 11 | yeon-zi | Nayeon Lee, Zihan Liu, Pascale Fung (HKUST, Hong Kong) | 0.758 | 0.744 | 0.787 | 0.765 | |
| 12 | tony-vincenzo | Todor Staykovski (Bulgaria) | 0.750 | 0.764 | 0.723 | 0.743 | |
| 13 | paparazzo | Duc-Vu Nguyen, Dang Van Thin, Ngan Luu-Thuy Nguyen (University of Information Technology - Vietnam National University Ho Chi Minh City, Vietnam) | 0.747 | 0.754 | 0.732 | 0.743 | |
| 14 | steve-martin | Youngjun Joo (Yonsei University, South Korea) | 0.745 | 0.853 | 0.592 | 0.699 | |
| 15 | eddie-brock | Antonio Šajatović, David Lozić, Doria Šarić, Filip Boltužić, Jan Šnajder (TakeLab, University of Zagreb, Croatia) | 0.744 | 0.782 | 0.675 | 0.725 | |
| 16 | ankh-morpork-times | Carla Perez-Almendros, Luis Espinosa-Anke, Steven Schockaert (Cardiff University, United Kingdom) | 0.742 | 0.811 | 0.631 | 0.710 | |
| 17 | spider-jerusalem | Amal Alabdulkarim, Tariq Alhindi (Columbia University, United States) | 0.742 | 0.814 | 0.627 | 0.709 | |
| 18 | carl-kolchak | Celine Park, Celena Chen, Jason Dwyer, Julie Medero (Harvey Mudd College, United States) | 0.739 | 0.729 | 0.761 | 0.745 | |
| 19 | doris-martin | Rodrigo Agerri (IXA NLP Group, University of the Basque Country UPV/EHU) | 0.737 | 0.754 | 0.704 | 0.728 | |
| 20 | pistachon | Abdul Saleh¹, Alberto Barrón-Cedeño², Ramy Baly¹, Giovanni Da San Martino², Mitra Mohtarami¹, Preslav Nakov² (¹MIT, USA / ²QCRI, Qatar) | 0.729 | 0.724 | 0.742 | 0.733 | |
| 21 | joseph-rouletabille | Jose G Moreno, Gilles Hubert, Karen Pinel-Sauvagnat, Yoann Pitarch (IRIT - Université de Toulouse, France) | 0.725 | 0.788 | 0.615 | 0.691 | |
| 22 | fernando-pessa | André Cruz, Gil Rocha, Rui Sousa Silva, Henrique Lopes Cardoso (LIACC and DEI/FEUP, Portugal) | 0.717 | 0.806 | 0.570 | 0.668 | |
| 23 | pioquinto-manterola | Ted Pedersen, Saptarshi Sengupta (University of Minnesota, United States) | 0.704 | 0.741 | 0.627 | 0.679 | |
| 24 | miles-clarkson | Chiyu Zhang, Arun Rajendran, Muhammad Abdul-Mageed (The University of British Columbia, Canada) | 0.683 | 0.745 | 0.557 | 0.638 | |
| 25 | xenophilius-lovegood | Albin Zehe, Andreas Hotho, Lena Hettinger, Christian Hauptmann, Stefan Ernst (University of Würzburg, Germany) | 0.675 | 0.619 | 0.914 | 0.738 | |
| 26 | orwellian-times | Jürgen Knauth (University of Göttingen, Germany) | 0.672 | 0.654 | 0.729 | 0.690 | |
| 27 | tintin | Yves Bestgen (CECL - UCLouvain, Belgium) | 0.656 | 0.642 | 0.707 | 0.673 | |
| 28 | d-x-beaumont | Jake Palanker, Evan Amason, Mary Clare Shen, Julie Medero (Harvey Mudd College, United States) | 0.653 | 0.597 | 0.939 | 0.730 | |
| 29 | jack-ryder | Daniel Shaprin (Sofia University, Bulgaria) | 0.646 | 0.646 | 0.646 | 0.646 | |
| 30 | kermit-the-frog | Talita Rani Anthonio (University of Groningen, Netherlands), Lennart Albertus Mathijs Kloppenburg (Netherlands) | 0.621 | 0.582 | 0.860 | 0.694 | |
| 31 | billy-batson | Tim Kreutz, Stiene Praet, Jeroen Peeters, Walter Daelemans (University of Antwerp, Belgium) | 0.615 | 0.568 | 0.962 | 0.714 | |
| 32 | peter-brinkmann | Michael Färber (University of Freiburg, Germany), Agon Qurdina (University of Prishtina, Kosovo), Lule Ahmedi (University of Prishtina, Kosovo) | 0.602 | 0.560 | 0.955 | 0.706 | |
| 33 | anson-bryson | Cordelia Stiff, Julie Medero (Harvey Mudd College, United States) | 0.592 | 0.720 | 0.303 | 0.426 | |
| 34 | sarah-jane-smith | Vijayasaradhi Indurthi (IIIT Hyderabad, India) | 0.591 | 0.554 | 0.933 | 0.695 | |
| 35 | kit-kittredge | Rebekah Cramerus, Tatjana Scheffler (University of Potsdam, Germany) | 0.578 | 0.547 | 0.908 | 0.683 | |
| 36 | brenda-starr | Olga Papadopoulou, Markos Zampoglou, Symeon Papadopoulos, Ioannis Kompatsiaris (ITI-CERTH, Information Technologies Institute, Centre for Research and Technology Hellas, Greece) | 0.575 | 0.542 | 0.971 | 0.696 | |
| 37 | harry-friberg | Peter Sumbler, Nazanin Afsarmanesh, Nina Viereckel, Jussi Karlgren (Gavagai AB, Sweden) | 0.565 | 0.537 | 0.949 | 0.686 | |
| 38 | robin-scherbatsky | Maarten Marx, Alexandra Akut (University of Amsterdam, Netherlands) | 0.551 | 0.542 | 0.662 | 0.596 | |
| 39 | clark-kent | Ramneek Kaur, Baani Leen Kaur Jolly, Viresh Gupta, Tanmoy Chakraborty (IIIT-Delhi, India) | 0.548 | 0.683 | 0.178 | 0.283 | |
| 40 | murphy-brown | Anamika Sen, Jiepu Jiang (Virginia Tech, United States) | 0.529 | 0.518 | 0.822 | 0.635 | |
| 41 | peter-parker | Yuanzhen Lin, Zhiyuan Ning, Ruichao Zhong, Weifeng Su, Jeffson Fong (Beijing Normal University - Hong Kong Baptist University United International College, China) | 0.503 | 0.502 | 0.771 | 0.608 | |
| 42 | john-king | Srijan Bansal, Arsalan Saad, Shayan Shafquat, Nitin Choudhary, Jasabanta Patro (India) | 0.462 | 0.460 | 0.443 | 0.451 | |
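Because the test set is balanced, accuracy is not independent of the hyperpartisan-class scores. With n hyperpartisan and n non-hyperpartisan articles, TP = R·n, FP = TP·(1/P - 1), and TN = n - FP, so accuracy = (TP + TN)/(2n) = (1 + 2R - R/P)/2, while F1 is the harmonic mean 2PR/(P + R). The short check below is a sketch that uses only numbers copied from the table above (the helper names are illustrative); it recovers the reported accuracy and F1 of the top two rows to within the three-decimal rounding of the published scores.

```python
def f1_from_pr(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


def balanced_accuracy_from_pr(precision: float, recall: float) -> float:
    """Accuracy implied by P and R when both classes have the same size n.

    TP = R*n, FP = TP*(1/P - 1), TN = n - FP  =>  acc = (1 + 2R - R/P) / 2
    """
    return (1 + 2 * recall - recall / precision) / 2


# (team, reported accuracy, precision, recall, F1) copied from the main leaderboard
rows = [
    ("bertha-von-suttner", 0.822, 0.871, 0.755, 0.809),
    ("vernon-fenwick", 0.820, 0.815, 0.828, 0.821),
]
for team, acc, p, r, f1 in rows:
    print(f"{team}: accuracy {balanced_accuracy_from_pr(p, r):.3f} (reported {acc:.3f}), "
          f"F1 {f1_from_pr(p, r):.3f} (reported {f1:.3f})")
```

The identity only holds when both classes are exactly the same size; small discrepancies in other rows are expected from rounding of the published scores.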

Labels-by-publisher Leaderboard

| # | Team | Members | Accuracy | Precision | Recall | F1 | Code |
|---|---|---|---|---|---|---|---|
| 1 | tintin | Yves Bestgen (CECL - UCLouvain, Belgium) | 0.706 | 0.742 | 0.632 | 0.683 | |
| 2 | joseph-rouletabille | Jose G Moreno, Gilles Hubert, Karen Pinel-Sauvagnat, Yoann Pitarch (IRIT - Université de Toulouse, France) | 0.680 | 0.640 | 0.827 | 0.721 | |
| 3 | brenda-starr | Olga Papadopoulou, Markos Zampoglou, Symeon Papadopoulos, Ioannis Kompatsiaris (ITI-CERTH, Information Technologies Institute, Centre for Research and Technology Hellas, Greece) | 0.664 | 0.627 | 0.807 | 0.706 | |
| 4 | xenophilius-lovegood | Albin Zehe, Andreas Hotho, Lena Hettinger, Christian Hauptmann, Stefan Ernst (University of Würzburg, Germany) | 0.663 | 0.632 | 0.781 | 0.699 | |
| 5 | yeon-zi | Nayeon Lee, Zihan Liu, Pascale Fung (HKUST, Hong Kong) | 0.663 | 0.635 | 0.766 | 0.694 | |
| 6 | miles-clarkson | Chiyu Zhang, Arun Rajendran, Muhammad Abdul-Mageed (The University of British Columbia, Canada) | 0.652 | 0.612 | 0.832 | 0.705 | |
| 7 | jack-ryder | Daniel Shaprin (Sofia University, Bulgaria) | 0.645 | 0.600 | 0.869 | 0.710 | |
| 8 | bertha-von-suttner | Ye Jiang, Johann Petrak, Xingyi Song, Kalina Bontcheva, Diana Maynard (University of Sheffield, United Kingdom) | 0.643 | 0.616 | 0.762 | 0.681 | |
| 9 | howard-beale | Ozan Arkan Can, Osman Mutlu (Koç University, Turkey), Erenay Dayanık (University of Stuttgart, Germany) | 0.641 | 0.606 | 0.806 | 0.692 | |
| 10 | eddie-brock | Antonio Šajatović, David Lozić, Doria Šarić, Filip Boltužić, Jan Šnajder (TakeLab, University of Zagreb, Croatia) | 0.631 | 0.681 | 0.491 | 0.571 | |
| 11 | sally-smedley | Shota Sasaki¹, Kazuaki Hanawa¹, Tsubasa Tagami¹, Hiroki Ouchi¹˒², Jun Suzuki¹˒², Kentaro Inui¹˒² (¹Tohoku University / ²RIKEN AIP, Japan) | 0.625 | 0.640 | 0.571 | 0.603 | |
| 12 | murphy-brown | Anamika Sen, Jiepu Jiang (Virginia Tech, United States) | 0.623 | 0.615 | 0.659 | 0.636 | |
| 13 | tom-jumbo-grumbo | Chia-Lun Yeh, Nava Tintarev (TU Delft, Netherlands), Babak Loni, Anne Schuth (De Persgroep) | 0.619 | 0.592 | 0.762 | 0.667 | |
| 14 | sarah-jane-smith | Vijayasaradhi Indurthi (IIIT Hyderabad, India) | 0.612 | 0.586 | 0.765 | 0.664 | |
| 15 | pistachon | Abdul Saleh¹, Alberto Barrón-Cedeño², Ramy Baly¹, Giovanni Da San Martino², Mitra Mohtarami¹, Preslav Nakov² (¹MIT, USA / ²QCRI, Qatar) | 0.608 | 0.638 | 0.499 | 0.560 | |
| 16 | morbo | Maja Karasalo, Andreas Horndahl, Magnus Rosell, Fredrik Johansson (FOI, Sweden) | 0.601 | 0.587 | 0.679 | 0.630 | |
| 17 | fernando-pessa | André Cruz, Gil Rocha, Rui Sousa Silva, Henrique Lopes Cardoso (LIACC and DEI/FEUP, Portugal) | 0.600 | 0.585 | 0.681 | 0.630 | |
| 18 | steve-martin | Youngjun Joo (Yonsei University, South Korea) | 0.597 | 0.625 | 0.483 | 0.545 | |
| 19 | borat-sagdiyev | Niko Palić, Juraj Vladika, Dominik Čubelić, Ivan Lovrenčić, Jan Šnajder, Maja Buljan (TakeLab, University of Zagreb, Croatia) | 0.592 | 0.644 | 0.412 | 0.502 | |
| 20 | kermit-the-frog | Talita Rani Anthonio (University of Groningen, Netherlands), Lennart Albertus Mathijs Kloppenburg (Netherlands) | 0.589 | 0.575 | 0.681 | 0.623 | |
| 21 | ankh-morpork-times | Carla Perez-Almendros, Luis Espinosa-Anke, Steven Schockaert (Cardiff University, United Kingdom) | 0.588 | 0.646 | 0.389 | 0.486 | |
| 22 | ned-leeds | Bozhidar Stevanoski, Sonja Gievska (Ss. Cyril and Methodius Skopje, Macedonia) | 0.573 | 0.546 | 0.857 | 0.667 | |
| 23 | orwellian-times | Jürgen Knauth (University of Göttingen, Germany) | 0.537 | 0.530 | 0.658 | 0.587 | |
| 24 | paparazzo | Duc-Vu Nguyen, Dang Van Thin, Ngan Luu-Thuy Nguyen (University of Information Technology - Vietnam National University Ho Chi Minh City, Vietnam) | 0.530 | 0.530 | 0.541 | 0.535 | |
| 25 | robin-scherbatsky | Maarten Marx, Alexandra Akut (University of Amsterdam, Netherlands) | 0.524 | 0.822 | 0.062 | 0.116 | |
| 26 | clark-kent | Ramneek Kaur, Baani Leen Kaur Jolly, Viresh Gupta, Tanmoy Chakraborty (IIIT-Delhi, India) | 0.519 | 0.565 | 0.170 | 0.261 | |
| 27 | dick-preston | Tim Isbister (FOI, Sweden) | 0.514 | 0.520 | 0.352 | 0.420 | |
| 28 | peter-brinkmann | Michael Färber (University of Freiburg, Germany), Agon Qurdina (University of Prishtina, Kosovo), Lule Ahmedi (University of Prishtina, Kosovo) | 0.497 | 0.496 | 0.344 | 0.406 | |

Task Committee